In my earlier post, I explained the fundamental shift from traditional search to generative AI search. Traditional search finds existing content. Generative AI creates new responses.

If you’ve been hearing recommendations about “AI-ready content” like chunk-sized content, conversational language, Q&A formats, and structured writing, these probably sound familiar. As instructional designers and content developers, we’ve used most of these approaches for years. We chunk content for better learning, write conversationally to engage readers, and use metadata for reporting and semantic web purposes.

Today, I want to examine how this shift starts at the very beginning: when systems index and process content.

What is Indexing?

Indexing is how search systems break down and organize content to make it searchable. Traditional search creates keyword indexes, while AI search creates vector embeddings and knowledge graphs from semantic chunks. The move from keywords to chunks is one of the most significant changes in how search technology works.

Let’s trace how both systems process the same content using three sample documents from my previous post:

Document 1: “Upgrading your computer's hard drive to a solid-state drive (SSD) can dramatically improve performance. SSDs provide faster boot times and quicker file access compared to traditional drives.”

Document 2: “Slow computer performance is often caused by too many programs running simultaneously. Close unnecessary background programs and disable startup applications to fix speed issues.”

Document 3: “Regular computer maintenance prevents performance problems. Clean temporary files, update software, and run system diagnostics to keep your computer running efficiently.”

User query: “How to make my computer faster?”

How does traditional search index content?

Traditional search follows three mechanical steps:

Step 1: Tokenization

This step breaks raw text into individual words. The three docs after tokenization look like this:

DOC1 → Tokenization → ["Upgrading", "your", "computer's", "hard", "drive", "to", "a", "solid-state", "drive", "SSD", "can", "dramatically", "improve", "performance", "SSDs", "provide", "faster", "boot", "times", "and", "quicker", "file", "access", "compared", "to", "traditional", "drives"]

DOC2 → Tokenization → ["Slow", "computer", "performance", "is", "often", "caused", "by", "too", "many", "programs", "running", "simultaneously", "Close", "unnecessary", "background", "programs", "and", "disable", "startup", "applications", "to", "fix", "speed", "issues"]

DOC3 → Tokenization → ["Regular", "computer", "maintenance", "prevents", "performance", "problems", "Clean", "temporary", "files", "update", "software", "and", "run", "system", "diagnostics", "to", "keep", "your", "computer", "running", "efficiently"]

Step 2: Stop Word Removal & Stemming

What are Stop Words?

Stop words are common words that appear frequently in text but carry little meaningful information for search purposes. They’re typically removed during text preprocessing to focus on content-bearing words.

Common English stop words:

a, an, the, is, are, was, were, be, been, being, have, has, had, do, does, did, will, would, could, should, may, might, can, of, in, on, at, by, for, with, to, from, up, down, into, over, under, and, or, but, not, no, yes, this, that, these, those, here, there, when, where, why, how, what, who, which, your, my, our, their

What is Stemming?

Stemming is the process of reducing words to a common root form, mainly by stripping suffixes and other word endings. The goal is to treat different forms of the same word as identical for search purposes. The resulting stem does not have to be a dictionary word: “computer” becomes “comput,” for example.
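To make the preprocessing concrete, here is a minimal Python sketch of tokenization, stop word removal, and stemming. It is an illustration under stated assumptions, not how any particular search engine implements these steps. The `tokenize`, `stem`, and `preprocess` helpers and the `SUFFIXES` rules are hypothetical names of mine, and the suffix stripper is a toy stand-in for real algorithms such as the Porter stemmer, so its output will not always match the stems shown in this post.

```python
import re

# Stop words copied from the list in this post.
STOP_WORDS = set(
    """a an the is are was were be been being have has had do does did will
    would could should may might can of in on at by for with to from up down
    into over under and or but not no yes this that these those here there
    when where why how what who which your my our their""".split()
)

# Toy suffix rules (an assumption for illustration), tried in this order.
SUFFIXES = ("ing", "ance", "ed", "er", "es", "s")


def tokenize(text):
    """Lowercase the text and split it into word tokens."""
    return re.findall(r"[a-z0-9]+(?:'[a-z]+)?", text.lower())


def stem(token):
    """Strip a possessive and at most one suffix, keeping at least 3 letters."""
    if token.endswith("'s"):
        token = token[:-2]
    for suffix in SUFFIXES:
        if token.endswith(suffix) and len(token) - len(suffix) >= 3:
            token = token[: -len(suffix)]
            # Collapse a doubled final consonant, e.g. "runn" -> "run".
            if token[-1] == token[-2] and token[-1] not in "aeiou":
                token = token[:-1]
            break
    return token


def preprocess(text):
    """Tokenize, drop stop words, then stem what remains."""
    return [stem(t) for t in tokenize(text) if t not in STOP_WORDS]


print(preprocess("How to make my computer faster?"))
```

With these toy rules, the sample query reduces to three terms: make, comput, and fast. A production system would swap in a tested stemmer and a much longer stop word list.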

Some stemming examples:

Original Word → Stemmed Form

"running" → "run"

"runs" → "run"

"runner" → "run"

"performance" → "perform"

"performed" → "perform"

"performing" → "perform"

The three sample documents after stop word removal and stemming look like this:

DOC1 Terms: ["upgrad", "comput", "hard", "driv", "solid", "stat", "ssd", "dramat", "improv", "perform", "ssd", "provid", "fast", "boot", "time", "quick", "file", "access", "compar", "tradit", "driv"]

DOC2 Terms: ["slow", "comput", "perform", "caus", "program", "run", "simultan", "clos", "unnecessari", "background", "program", "disabl", "startup", "applic", "fix", "speed", "issu"]

DOC3 Terms: ["regular", "comput", "maintain", "prevent", "perform", "problem", "clean", "temporari", "file", "updat", "softwar", "run", "system", "diagnost", "keep", "comput", "run", "effici"]

Step 3: Inverted Index Construction

What is an inverted index?

An inverted index is like a book’s index, but instead of mapping topics to page numbers, it maps each unique word to all the documents that contain it. It’s called “inverted” because instead of going from documents to words, it goes from words to documents.

Note: For clarity and space, I’m showing only a representative subset that demonstrates key patterns.

The complete inverted index would contain entries for all 45 unique terms from our processed documents. The key patterns include:

- Terms appearing in all documents (common terms like “comput”)

- Terms unique to one document (distinctive terms like “ssd”)

- Terms with varying frequencies (like “program” with tf=2)

INVERTED INDEX:

"comput" → {DOC1: tf=1, DOC2: tf=1, DOC3: tf=2}

"perform" → {DOC1: tf=1, DOC2: tf=1, DOC3: tf=1}

"fast" → {DOC1: tf=1}

"speed" → {DOC2: tf=1}

"ssd" → {DOC1: tf=2}

"program" → {DOC2: tf=2}

"maintain" → {DOC3: tf=1}

"slow" → {DOC2: tf=1}

"improv" → {DOC1: tf=1}

"fix" → {DOC2: tf=1}

"clean" → {DOC3: tf=1}

The result: An inverted index that maps each word to the documents containing it, along with frequency counts.

Why inverted indexing matters for content creators:

Traditional search relies on keyword matching. This is why SEO focused on keyword density and exact phrase matching.
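To make the keyword-matching idea concrete, here is a minimal Python sketch that builds an inverted index from the stemmed term lists shown earlier and looks up the sample query. The function names `build_inverted_index` and `lookup` are my own, the query terms are assumed to be already preprocessed, and scoring by summed term frequency is a deliberate simplification. Real engines typically use weighting schemes such as TF-IDF or BM25.

```python
from collections import Counter, defaultdict

# Stemmed terms copied from the processed sample documents in this post.
DOC_TERMS = {
    "DOC1": ["upgrad", "comput", "hard", "driv", "solid", "stat", "ssd",
             "dramat", "improv", "perform", "ssd", "provid", "fast", "boot",
             "time", "quick", "file", "access", "compar", "tradit", "driv"],
    "DOC2": ["slow", "comput", "perform", "caus", "program", "run",
             "simultan", "clos", "unnecessari", "background", "program",
             "disabl", "startup", "applic", "fix", "speed", "issu"],
    "DOC3": ["regular", "comput", "maintain", "prevent", "perform",
             "problem", "clean", "temporari", "file", "updat", "softwar",
             "run", "system", "diagnost", "keep", "comput", "run", "effici"],
}


def build_inverted_index(doc_terms):
    """Map each term to {doc_id: term frequency}."""
    index = defaultdict(dict)
    for doc_id, terms in doc_terms.items():
        for term, tf in Counter(terms).items():
            index[term][doc_id] = tf
    return dict(index)


def lookup(index, query_terms):
    """Score each document by summing the tf of every matching query term."""
    scores = Counter()
    for term in query_terms:
        for doc_id, tf in index.get(term, {}).items():
            scores[doc_id] += tf
    return scores


index = build_inverted_index(DOC_TERMS)
print(index["program"])  # {'DOC2': 2}

# Assumed preprocessed form of "How to make my computer faster?"
print(lookup(index, ["make", "comput", "fast"]))
```

Notice that "make" matches nothing and "fast" matches only DOC1. A document that talks about speed without using that exact stem gets no credit for it, which is the limitation that keyword-focused SEO worked around.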

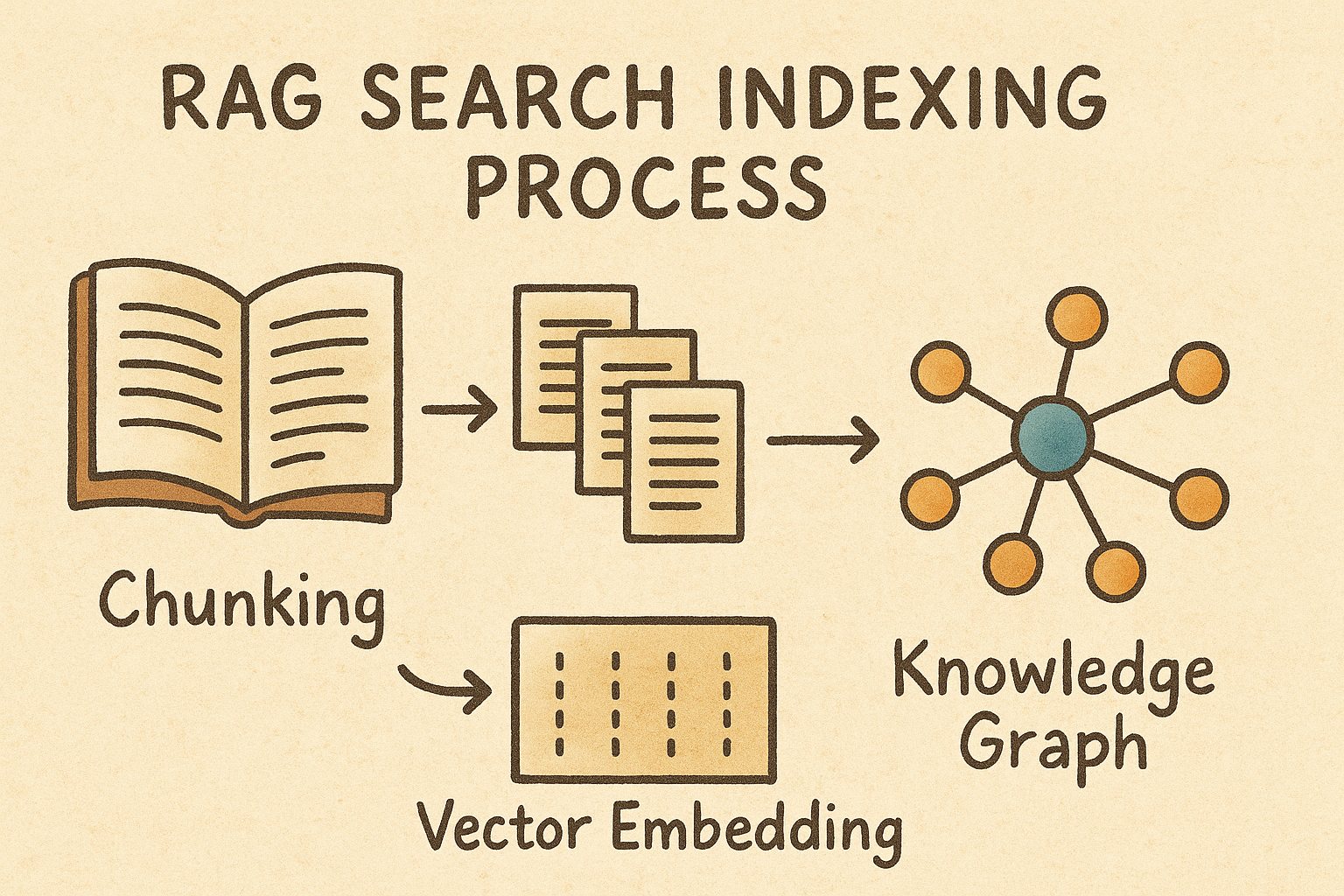

How do AI systems index content?

AI systems take a fundamentally different approach:

Step 1: Semantic chunking

AI indexing doesn’t start by reducing content to isolated keywords. Instead, it splits content into meaningful, self-contained chunks. AI systems analyze content for topic boundaries, logical sections, and complete thoughts to determine where to split content. They look for natural break points that preserve context and meaning.

What AI Systems Look For When Chunking

1. Semantic Coherence

- Topic consistency: Does this section maintain the same subject matter?

- Conceptual relationships: Are these sentences talking about related ideas?

- Context dependency: Do these sentences need each other to make sense?

2. Structural Signals

- HTML tags: Headings (H1, H2, H3), paragraphs, lists, sections

- Formatting cues: Line breaks, bullet points, numbered steps

- Visual hierarchy: How content is organized on the page

3. Linguistic Patterns

- Transition words: “However,” “Therefore,” “Next,” “Additionally”

- Pronoun references: “It,” “This,” “These” that refer to previous concepts

- Discourse markers: Words that signal topic shifts or continuations

4. Completeness of Information

- Self-contained units: Can this chunk answer a question independently?

- Context sufficiency: Does the chunk have enough background to be understood?

- Action completeness: For instructions, does it contain a complete process?

5. Optimal Size Constraints

- Token limits: Embedding models accept a limited number of tokens per input (commonly 512, 1024, or 4096, depending on the model)

- Embedding efficiency: Chunks need to be small enough for accurate vector representation

- Memory constraints: Balance between context preservation and processing speed

6. Content Type Recognition

- Question-answer pairs: Natural chunk boundaries

- Step-by-step instructions: Each step or related steps become chunks

- Examples and explanations: Keep examples with their explanations

- Lists and enumerations: Group related list items
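Two of these signals, sentence boundaries and a size limit, are simple enough to sketch in code. The following Python example is a rough illustration only. The function names and the `max_tokens` parameter are my own assumptions, token counts are approximated by counting whitespace-separated words, and real systems layer semantic analysis on top of rules like these.

```python
import re

DOC2 = (
    "Slow computer performance is often caused by too many programs "
    "running simultaneously. Close unnecessary background programs and "
    "disable startup applications to fix speed issues."
)


def split_sentences(text):
    """Split on sentence-ending punctuation followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def chunk_by_sentences(text, max_tokens=20):
    """Pack whole sentences into chunks of at most max_tokens words.

    A single sentence longer than max_tokens still becomes its own
    (oversized) chunk, because sentences are never split here.
    """
    chunks, current, current_len = [], [], 0
    for sentence in split_sentences(text):
        n = len(sentence.split())  # crude word count, not real model tokens
        if current and current_len + n > max_tokens:
            chunks.append(" ".join(current))
            current, current_len = [], 0
        current.append(sentence)
        current_len += n
    if current:
        chunks.append(" ".join(current))
    return chunks


for chunk in chunk_by_sentences(DOC2, max_tokens=20):
    print(chunk)
```

With a budget of 20 words, each sentence of Document 2 becomes its own chunk. Raising the budget to 30 keeps both sentences together, which shows the trade-off between context preservation and chunk size.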

For demonstration purposes, I’m breaking our sample documents by sentences, though real AI systems use more sophisticated semantic analysis:

DOC1 → Chunk 1A: "Upgrading your computer's hard drive to a solid-state drive (SSD) can dramatically improve performance."

DOC1 → Chunk 1B: "SSDs provide faster boot times and quicker file access compared to traditional drives."

DOC2 → Chunk 2A: "Slow computer performance is often caused by too many programs running simultaneously."

DOC2 → Chunk 2B: "Close unnecessary background programs and disable startup applications to fix speed issues."

DOC3 → Chunk 3A: "Regular computer maintenance prevents performance problems."

DOC3 → Chunk 3B: "Clean temporary files, update software, and run system diagnostics to keep your computer running efficiently."

Step 2: Vector embedding

Vector embeddings are created using pre-trained transformer neural networks like BERT, RoBERTa, or Sentence-BERT. These models have already learned semantic relationships from massive text datasets. Each chunk is tokenized first, then passed through the pre-trained model. The model outputs a fixed-length vector of numbers (768 dimensions in the examples here) that serves as a mathematical representation of the chunk’s meaning.

Chunk 1A → Embedding: [0.23, -0.45, 0.78, ..., 0.67] (768 dims)

Semantic Concepts: Hardware upgrade, SSD technology, performance improvement

Chunk 1B → Embedding: [0.18, -0.32, 0.81, ..., 0.71] (768 dims)

Semantic Concepts: Speed benefits, boot performance, storage comparison

Chunk 2A → Embedding: [-0.12, 0.67, 0.34, ..., 0.23] (768 dims)

Semantic Concepts: Performance issues, software conflicts, resource problems

Chunk 2B → Embedding: [-0.08, 0.71, 0.29, ..., 0.31] (768 dims)

Semantic Concepts: Software optimization, process management, troubleshooting

Chunk 3A → Embedding: [0.45, 0.12, -0.23, ..., 0.56] (768 dims)

Semantic Concepts: Preventive care, maintenance philosophy, problem prevention

Chunk 3B → Embedding: [0.41, 0.18, -0.19, ..., 0.61] (768 dims)

Semantic Concepts: Maintenance tasks, system care, routine optimization

Step 3: Knowledge graph construction

What is a Knowledge Graph?

A knowledge graph is a structured way to represent information as a network of connected entities and their relationships. Think of it like a map that shows how different concepts relate to each other. For example, it captures that “SSD improves performance” or “too many programs cause slowness.” This explicit relationship mapping helps AI systems understand not just what words appear together, but how concepts actually connect and influence each other.

How is a knowledge graph constructed?

The system analyzes each text chunk to identify: (1) Entities – the important “things” mentioned (like Computer, SSD, Performance), (2) Relationships – how these things connect to each other (like “SSD improves Performance”), and (3) Entity Types – what category each entity belongs to (Hardware, Software, Metric, Process). These extracted elements are then linked together to form a web of knowledge that captures the logical structure of the information.
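One simple way to hold such extracted elements is as (subject, relation, object) triples. The Python sketch below assumes the extraction has already happened and only shows storage and traversal. The `build_graph` and `find_path` helpers are hypothetical names, and the triples are a small sample of the relationships used in this post.

```python
from collections import defaultdict, deque

# (subject, relation, object) triples sampled from this post's example.
TRIPLES = [
    ("Computer", "HAS_COMPONENT", "Hard Drive"),
    ("Hard Drive", "CAN_BE_UPGRADED_TO", "SSD"),
    ("SSD", "PROVIDES", "Faster Boot Times"),
    ("SSD", "PROVIDES", "Quicker File Access"),
    ("Too Many Programs", "CAUSES", "Slow Performance"),
    ("Regular Maintenance", "PREVENTS", "Performance Problems"),
]


def build_graph(triples):
    """Index triples by subject so outgoing edges are quick to follow."""
    graph = defaultdict(list)
    for subject, relation, obj in triples:
        graph[subject].append((relation, obj))
    return dict(graph)


def find_path(graph, start, goal):
    """Breadth-first search; returns the chain of triples or None."""
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        node, path = queue.popleft()
        if node == goal:
            return path
        for relation, neighbor in graph.get(node, []):
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append((neighbor, path + [(node, relation, neighbor)]))
    return None


graph = build_graph(TRIPLES)
for step in find_path(graph, "Computer", "Faster Boot Times"):
    print(step)
```

Following the edges from Computer to Faster Boot Times passes through Hard Drive and SSD. This kind of explicit reasoning path is what a graph adds on top of raw text.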

CHUNK-LEVEL RELATIONSHIPS:

Chunk 1A:

[Computer] --HAS_COMPONENT--> [Hard Drive]

[Hard Drive] --CAN_BE_UPGRADED_TO--> [SSD]

[SSD Upgrade] --CAUSES--> [Performance Improvement]

Chunk 1B:

[SSD] --PROVIDES--> [Faster Boot Times]

[SSD] --PROVIDES--> [Quicker File Access]

[SSD] --COMPARED_TO--> [Traditional Drives]

[SSD] --SUPERIOR_IN--> [Speed Performance]

Chunk 2A:

[Too Many Programs] --CAUSES--> [Slow Performance]

[Programs] --RUNNING--> [Simultaneously]

[Multiple Programs] --CONFLICTS_WITH--> [System Resources]

Chunk 2B:

[Close Programs] --FIXES--> [Speed Issues]

[Disable Startup Apps] --IMPROVES--> [Boot Performance]

[Background Programs] --SHOULD_BE--> [Closed]

Chunk 3A:

[Regular Maintenance] --PREVENTS--> [Performance Problems]

[Maintenance] --IS_TYPE_OF--> [Preventive Action]

Chunk 3B:

[Clean Temp Files] --IMPROVES--> [Efficiency]

[Update Software] --MAINTAINS--> [Performance]

[System Diagnostics] --IDENTIFIES--> [Issues]

Consolidated knowledge graph

COMPUTER PERFORMANCE
├── HARDWARE SOLUTIONS: [Hard Drive] → [SSD]
│   ├── [Boot Times]
│   └── [File Access]
├── SOFTWARE SOLUTIONS: [Programs] → [Management]
│   ├── [Close]
│   └── [Disable]
└── MAINTENANCE SOLUTIONS: [Regular] → [Tasks]
    ├── [Clean]
    └── [Update]

All six branches lead to → PERFORMANCE IMPROVEMENT

How do knowledge graphs work with vector embeddings?

Vector embeddings and knowledge graphs work together as complementary approaches. Vector embeddings capture implicit semantic similarities (chunks about “SSD benefits” and “computer speed” have similar vectors even without shared keywords), while knowledge graphs capture explicit logical relationships (SSD → improves → Performance). During search, vector similarity finds semantically related content, and the knowledge graph provides reasoning paths to discover connected concepts and comprehensive answers. This combination enables both fuzzy semantic matching and precise logical reasoning.
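The vector-similarity half of this pairing usually comes down to cosine similarity. As a rough sketch, the Python below compares a few of the illustrative chunk vectors from earlier in this post. It uses only the four components that were printed, so these are truncated stand-ins for the 768-dimensional vectors, and the `cosine_similarity` and `most_similar` helpers are hypothetical names. In a real search, the user query would be embedded and compared the same way.

```python
import math

# Only the four printed components of each illustrative vector; the real
# embeddings have 768 dimensions, so these numbers are for demonstration.
EMBEDDINGS = {
    "1A": [0.23, -0.45, 0.78, 0.67],
    "1B": [0.18, -0.32, 0.81, 0.71],
    "2A": [-0.12, 0.67, 0.34, 0.23],
    "3A": [0.45, 0.12, -0.23, 0.56],
}


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def most_similar(query_id, embeddings):
    """Rank every other chunk by similarity to the chosen chunk."""
    query = embeddings[query_id]
    scores = {
        chunk_id: cosine_similarity(query, vector)
        for chunk_id, vector in embeddings.items()
        if chunk_id != query_id
    }
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


for chunk_id, score in most_similar("1A", EMBEDDINGS):
    print(chunk_id, round(score, 2))
```

Chunk 1B ranks first for Chunk 1A because both describe SSD benefits, while the software-focused Chunk 2A scores lowest. A knowledge graph lookup could then expand on the top matches with explicitly related concepts.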

Why does AI indexing drive the chunk-sized and structured content recommendations?

When AI systems chunk content, they look for topic boundaries, complete thoughts, and logical sections. They analyze content for natural break points that preserve context and meaning. AI systems perform better when content is already organized into self-contained, meaningful units.

When you structure content with clear section breaks and complete thoughts, you do the chunking work for the AI. This ensures related information stays together and context isn’t lost during the indexing process.

What’s coming up next?

In the next post of this series, I’ll dive into how generative AI and RAG-powered search reshape the way systems interpret user queries, compared with traditional keyword-focused methods. This post showed that AI indexes content by meaning, through chunking, vector embeddings, and concept networks. It’s equally important to look at how AI understands what users actually mean when they search.